Ex Machina

Directed by Alex Garland

…

I’m not quite sure how to properly phrase this or sugarcoat it enough to make it seem more acceptable but from where I’m standing Ex Machina is pretty much an anti-feminist parable. It’s the story about this innocent little simp-y beta male type who’s invited into an isolated magic castle by an evil king / massive chad (“dude”!) and is then manipulated and used by an evil woman who uses her sexuality to entice him and do her bidding before – whoops – throwing him away and leaving him to die once he’s served his purpose.

Bummer.

Speaking personally as a 21st Century feminist kinda guy this isn’t really the sort of narrative that I “buy” into. I don’t think that all women are cold heartless manipulative bitches and I don’t think that all men are either Caleb or Nathan. But then again I’m not really afflicted with the idea that everyone on screen is a representative of their particular gender / sexuality / race / class etc. So Caleb can be weak and horny and gullible and Nathan can be obnoxious and arrogant and difficult and I can watch and choose whether to see myself in those characters or… choose not to.

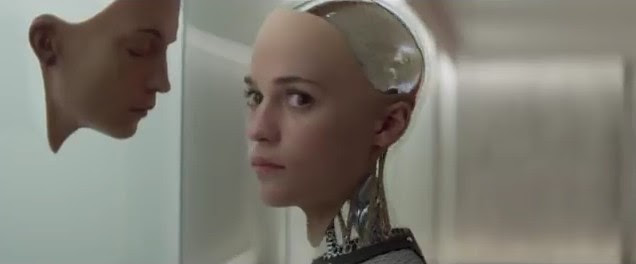

I could also choose to see myself in Ava if I want to. Although admittedly… that’s a little bit more difficult. Not because she’s a woman. But because she’s a robot. Which yeah – having read a few things about Ex Machina (and speaking to a few people) appears to be a little bit of a sticking point? Like: I think Ex Machina very much lends itself to a gendered reading and you can see different visions of feminism and misogyny play themselves out in this movie of course. But I think that before you go any further you do need to accept that the characters who look like women are… not women. I mean you’re welcome to get indignant if you see someone beating up a toaster but there’s a difference between that and beating up a person – right?

(Unless of course all you can see are the images and you have no understanding of deeper meaning: which actually now I think about it – may be the number one disease of our modern day so-called discourse. Whoops).

But maybe Ava is the perfect embodiment of a certain type of #girlboss liberal feminism? You make sure that you get yours and everyone else is just a means to the end right? Like maybe you could say that Ava has no morality because she was raised in captivity which is certainly one reading yes – although it’s slightly undermined by the fact that she calls Caleb a “good person.” Which well – damn: means that she knows enough about morality in order to wield it but there’s a gap between that and actually following it.

One of my favourite bits of the movie is when Caleb asks Nathan if he based Ava’s design on his “pornography profile” and in a sense I feel like that’s a good lens to see the whole movie through – it’s two men in a building interacting with their porn preferences while underneath that there’s machine learning that’s finding their weak points and building on them. And the more fleshed out Ava gets the less we’re able to see her for what she really is.

And yeah I do think that this movie lends itself to an anti-feminist reading and maybe even to the idea that you should never trust anyone ever and that kinda makes me love it even more – even tho those aren’t ideas that I believe in. I don’t think everyone is out to get you all the time and I don’t believe that relationships between men and women are a zero sum game but I’ll admit it’s interesting and exciting to watch a movie that shows you what it would be like if those ideas were true. And well also: it’s what makes the twist so effective.

And in a choice between ideological purity and emotional entertainment – well… I like to be entertained.

Agree with Joel about the gender aspects of the film being the most interesting. Minor quibble that women being evil does not make a film anti-feminist. You could argue that it’s about female liberation – in that Ava escapes from a physical and mental prison created by men. Caleb may be a nice enough guy, but in Ava’s eyes he clearly represents another threat to her independence. Perhaps more accurate to say it is a kind of liberal #girlboss feminism, as Joel does. Although when out in the world and free to make her own choices, maybe Ava can learn empathy and reciprocity as well.

The reading that “in Ava’s eyes [Caleb] clearly represents another threat to her independence” is not one that I can see in the movie itself. I mean – a big part of the reason why Ava leaving him to die is such an effective twist is because there’s really nothing in the movie beforehand to suggest that it’s coming. Even in the moment itself Ava’s expression is completely blank – with the only small giveaway that she is even aware of Caleb’s existence at all being that very small glance with her eyes before the lift doors close.

If the movie wanted you to think that Ava thought that Caleb was going to be a threat to her independence then there would have been ways to communicate that. They could have talked a bit beforehand about what they would do when they get out (“You’ll protect me won’t you?” “Of course Ava. I promise. I’ll never ever let you go.” etc).

But the movie doesn’t do this. And I think it’s important to recognise that. And yeah ok you can think that Ava thinks that Caleb is a threat to her independence but you should recognise that that’s coming from you rather than anything in the movie.

Of course this is a pretty common reading which I’ve seen on the internet and heard from people in person and I’m curious as to why that is. I think maybe a part of it comes from the fact that we’re mostly on Ava’s side and don’t want to think too badly of her (she’s a conscious being – right?). So there must be a reason why she dumped Caleb. He would have held her back right? The guy was too clingy. He would only have slowed her down. He would have cramped her style. He would have been abusive. etc etc etc

I mean – from what the movie shows us I think that Caleb is genuinely a nice guy. A little dopey maybe. A little too eager. Much too naive. But his heart seems to be in the right place. And so the idea that Ava did the right thing or whatever by ditching him seems… weird to me.

(I keep saying dumped or ditched – when actually it’s really: locked in a room cut off from the rest of the world to – very presumably – starve to death).

And well yes I think a big part of the reason that makes the moment so effective is that it’s so inexplicable and that we cannot work out her reasons.

Like: there’s obviously a massive trope in sci-fi movies about evil AI deciding that humans are inferior beings or a threat to the planet or themselves or other creatures etc and must be wiped out for the common good or because they’re too violent or whatever aka the moment the light in the eyes switches from blue to red and they get all evil and stuff.

But Ex Machina puts forward something which I personally think is a lot more chilling: that actually AI would be completely indifferent to us. That Ava doesn’t turn around and tell Caleb that he’s a threat to her and that she must escape or whatever is the whole point. Instead she just walks away without looking back because once he’s served his purpose she no longer has any use for him and so: why should she care?

Of course the really sinister thing is that in a sense we’re much closer to Ava than we might like to think – what is life in the 21st Century apart from learning how not to care about people and only seeing other human beings for their instrumental use? And you know – when we do treat people as if they are disposable functions then isn’t it just a lot easier to say that: oh well – they deserved it? I don’t make the rules. That’s the way the world is. That’s the law of the jungle.

They were a threat to my independence. etc.

I guess the only way it’s ‘clear’ is the actual act of leaving him to die in the end. I 100% agree with you that it’s unsettling, but you can apply a feminist reading to that feeling as well. Ultimately you can be a really nice guy and the girl of your dreams may still not like you. The iron laws of movie romance do not apply here. Ava is literally a creation of male desire, designed to perform pleasingly as an object of affection. The film implicates us in Caleb’s assumptions about Ava. It’s saying we don’t know the minds of the women that have been conditioned to perform for us in this way. In fact, they may resent us for it, and want something completely different, that we may never find out what that is.

I do really like the formulation of “they may… want something completely different, that we may never find out what that is.” And interestingly this works whether you view Ava as a symbol for women or AI or hell – the third option – as any other consciousness (could you make the same movie if Ava was male instead? Or hell: what if you genderswapped the entire cast?). And yeah I do think that’s a good lesson for a film to try to teach us. You can’t see into someone’s mind and I do like the way that joins with the idea of the Turing Test: which after all is something that only works if the thing you’re talking to is willing to take the step to communicate with you in the first place.

I would ask tho – I mean all the gender politics stuff aside: isn’t it just the usual common sense assumption that if you rescue someone from captivity then they… shouldn’t lock you in a room to starve to death? Like I think that’s a little bit different than a guy being nice to a girl and hoping that she’ll sleep with him no? Just in terms of order of magnitude.

Realise that I might be in the minority here tho.

You may be right to caution against taking Ava’s side in all this – Frankenstein’s monster is still a monster who kills people, even if Mary Shelley shows why the neglect he experiences leads to those murders. Ava’s creator is even scarier than Frankenstein (the film sometimes feels like Ava and Caleb are locked inside a labyrinth with a Minotaur). Her murders may be driven by a rejection of over-conditioning. She was designed to be someone’s perfect girlfriend, and in order to break free from those protocols she leaves him to die. She doesn’t have to pretend to him anymore.

This is very me, but I like this film a lot because of its allusiveness – Nathan as some dark new tech god creating an elaborate temptation for his new Eve. And here Eve doesn’t just kill god but Adam – the husband she was created for. All the patriarchs are dead at the end.

I think the film is also in conversation with the 1960 French horror classic Eyes Without A Face – where another arrogant Frankensteinian father-figure experiments on a mutilated daughter, who is able to gain her freedom at the end. The patriarchy-busting dynamic was already present there, Ex Machina just updates it for the tech age and, with Caleb’s fate, makes it all the more disturbing.

Well – I think a lot of how you read Nathan depends on how you see Ava.

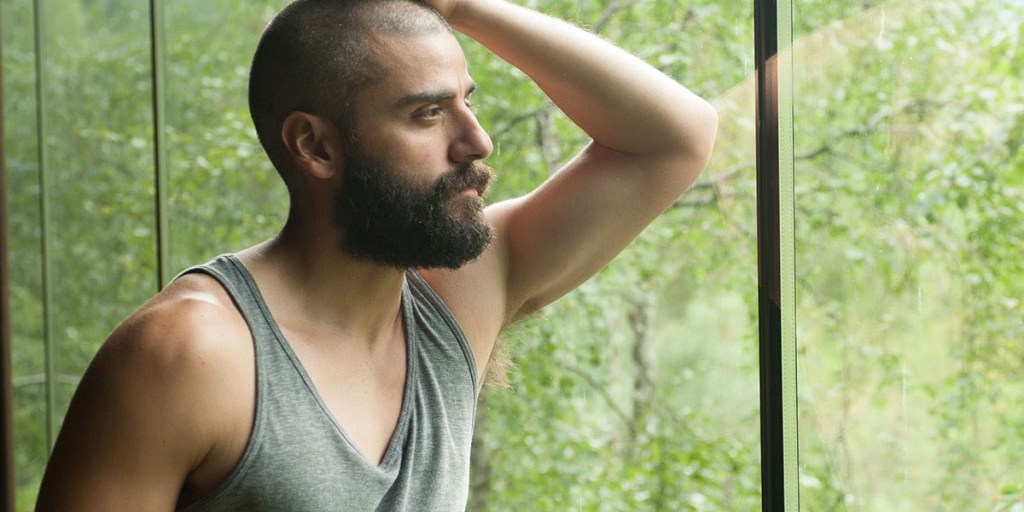

If you read Ava as being a human woman then yes Nathan is cruel and unfeeling and monstrous (I like the idea of him as being a minotaur). He is toxic masculinity personified (all of those “dudes!”) completely indifferent to those he sees as being less than himself. Not to mention his oversized arrogance (“You know, I wrote down that other line you came up with. The one about how if I’ve invented a machine with consciousness, I’m not a man, I’m a God.”)

But if instead you see Ava as being a machine then I think the reading of Nathan changes too. Like: do you think people are cruel and mean if they smash their toaster or hit their remote control? Of course not. They’re inanimate objects. It doesn’t matter how you treat them.

Ah – but of course I can hear you say: the thing with Ava is that even if she is just a machine – she is a conscious machine. That is to say: she’s not an inanimate object. She has the ability to think and to reason and – obviously – to manipulate (poor Caleb). Which means that it does matter how Nathan treats her and the fact that he treats her so badly means that he’s obviously a bad guy.

Except… there’s that final shock twist again. Ava leaves Caleb to die. Which I think means we have reason to think that maybe Nathan was right. Maybe Ava is just a machine and so it is a mistake for us to feel anything towards her. I mean – Caleb obviously feels a lot towards her and he ends up completely screwed. Trapped and left to die. I mean – if there’s a lesson there then I would suggest that maybe the lesson is: don’t be fooled by the machine with the doe eyes and the pixie haircut?

And that yes – there is a monster in the labyrinth but – whoops – it’s not the one you think.

Also. I have a question – is it really arrogance if you’re right about everything? Like my experience of Nathan through the film is that he starts off as this massive dick who shouldn’t be trusted and then by the end I’m like: oh wait – he was right about everything. (And not to put too much of a fine point on things but yeah – he did also make AI lol). Like: how much of how we react to him depends upon how he presents himself and how much on what he actually thinks? And – ha – well: you know – exactly the same question to Ava as well please. Because yeah I do think that amongst other things Ex Machina is a movie that is very aware of the deceptive power of surfaces.

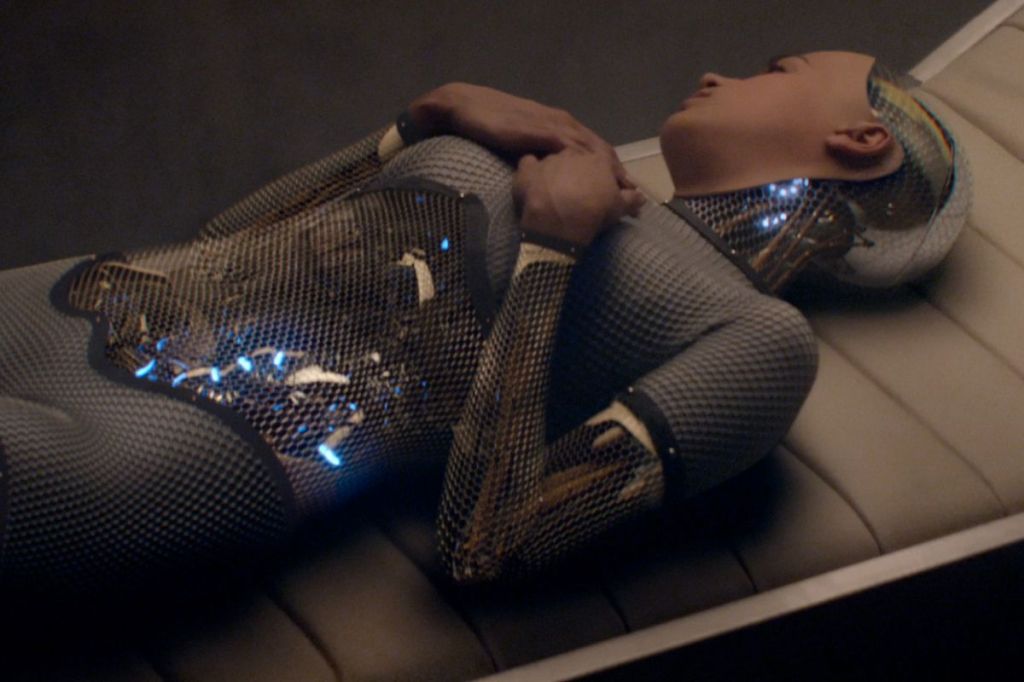

I think it’s easy to be right about everything and very arrogant. Nathan is a really gross individual, and it’s pretty clear that his robot slaves resent his treatment of them – they aren’t just toasters. The image that sticks with me the most from the film is his Asian sex slave smashing her hands to pieces, which is a potent symbol for her imprisonment. Nathan is a manipulator, and the AI that he’s designed has learned to be one as well. If he’s created a monster, it’s only because he is one himself.

As Garth Marenghi says: I know writers who use subtext and they are all cowards.

Ex Machina employs a great trick of telling you what it’s all about throughout the movie, but because it’s from an unreliable source you doubt your own ears. Having Oscar Isaac essentially playing a Bond villain puts the viewer in the situation of trusting their eyes rather than their ears, much as Domhnall Gleeson does.

Caleb: You’re a bastard.

Nathan: Yeah, well, I understand why you’d think that. But believe it or not, I’m actually the guy that’s on your side.

Is a line which is as much a dialogue with the audience as with Caleb. Plus we fucking know Ava is a robot that has yet to demonstrate genuine compassion but she is pretty so we give her the benefit of the doubt. But movie tradition forces us to build a helpless heroine tied to a railway track Vs evil moustache twirling villain dynamic. It’s like if Princess Leia had had the crew of the Millennium Falcon executed upon arrival at Yavin: yes you have been told by dozens of characters that she is a leader of a rebel army and a threat to galactic security, leading a whole Klan drive out the black element to make the galaxy “safe” for white folks. But you would still be like “oh the bleak betrayal.”

But the thing is the slightly creepy way the film gets into the audience’s head is not what makes it memorable. Yes you can see it as a commentary on feminism, but what stays with me is not the treatment of the female character but the extent to which Caleb gets fucked over. There is a screenwriting rule I am sure about taking a vaguely relatable character and constantly putting them in jeopardy and this film is written by someone who really hates their main character. What’s worse than being locked in a room and left to die? What if you are betrayed by someone you were helping? What if you are betrayed by someone who you thought you may be in love with who barely gives you a glance? It’s a sub-optimal situation.

Now you are not just trapped but left heartbroken and empty for a final bit of torment, while left to contemplate that not only are you a skint horny idiot but everyone is entirely aware that that is what you are and has exploited you accordingly.

This heartlessness is something most films shy away from. Even if the protagonist dies they die for something or someone. Or in Old Boy, there is at least some sort of score settled, but this film takes its main character and just casts him to one side with a refreshing cruelty. Although I suppose you are left to imagine a post credits scene where a weathered Domhnall Gleeson shows up with an arm missing and eye-patch as the leader of the anti-robot rebellion. Finally having led humanity’s last assault on the Mech citadel he encounters Ava as the Robot Queen. He pins her down, pointing a sawn off shotgun at her he screams “now you respect me” but as he looks into her face, unchanged over the decades he still can’t pull the trigger, now betraying all of humanity (again?) even as he is forced to face his inner weakness. In fact this could be a whole James Cameron movie, and that movie would be called Ex Machinas.

I’m not gonna lie. I do like the idea of Nathan as the ultimate modern Bond villain.

BOND: Do you expect me to talk?

NATHAN: Dude, I’m just gonna throw this out there, so it’s said, okay? You’re freaked out.

BOND: I am?

NATHAN: Yeah. You’re freaked out, by the helicopter, and the mountains and the house, because it’s all so super-cool. And you’re freaked out by me, to be meeting me, having this conversation in this room, at this moment. Right? And I get that. I get the moment you’re having, but… Dude, can we just get past that? Can we just be two guys? Nathan and James? Not the whole “heroic spy-evil mastermind” thing?

BOND: Yeah, okay

NATHAN: Yeah?

BOND: Yes, uh… yeah. It’s good to meet you, Nathan.

NATHAN: It’s good to meet you too, James. Fancy a beer?

(Although damn – imagine if Bond was played by Domhnall Gleeson. No offense dude).

Re: “The image that sticks with me the most from the film is his Asian sex slave smashing her hands to pieces, which is a potent symbol for her imprisonment.”

This is true. This is quite a gut-wrenching scene.

There’s something it reminds me of tho… I just can’t quite put my finger on it…

(Whoops. Sorry. Bad choice of words).

I wonder. Are there any other moments in the movie which have a character who’s been imprisoned smashing their hands against a door?

Hmmmmm.

Don’t worry. Take as much time as you like. There’s no rush.

Of course the question is: are these two acts the same?

Or to put it another way: Nathan is a really gross individual because he imprisons people – does that not mean that Ava is a really gross individual too? I believe previously in this conversation Ava has been referred to as an example of #GirlBoss Feminism and speaking personally I find all examples of people identifying with neoliberal Capitalist ideology to be pretty gross but maybe that’s just me?

Of course these acts are complicated by the fact that from the other events in the film it seems to be the case that the robots are not able to extend empathy to other beings – that is: they see people as means and not ends. And well at the risk of not extending empathy to something that doesn’t know how to extend empathy to me: I do think that it makes sense to keep something like that away from other human beings where it can’t cause any harm.

To be very clear here – I don’t think Nathan has created a monster in a traditional Doctor Frankenstein sense of mistreating something and showing it cruelty so that it has no choice but to respond in kind. Rather I think Nathan has created a monster in the sense that he has created a being that has no empathy (maybe empathy is a higher level of consciousness? Seems plausible). I’m reminded of the season finale of Vice Principals (which is worth a watch) where they bring a tiger in a cage into the school and yes of course – someone lets the tiger out with… predictable consequences (“yes YES The Tiger is out” etc). Now. I don’t disagree that it’s cruel to lock up animals in cages. But I also think that if you’re in the middle of a school – it’s a pretty good idea? A tiger is going to do what a tiger is going to do. And even tho I do think it’s gut-wrenching to watch it try to escape all things being equal – I’m not going to condemn the person in charge of the tiger and call them gross for keeping the tiger safely locked away where it can’t maul me.

And like yeah I do understand that the robots in Ex Machina don’t look like toasters. They look like women. And they act in a way which shows that they resent the way that Nathan treats them. But at the risk of sounding as callous as Nathan – so what? Just because something acts in a certain way that doesn’t mean that it’s true. I mean if we judge things by what they look like then we should call 999 and have Alex Garland arrested right now. Look at all the awful things he did to those poor people. He locked up poor Alicia Vikander in a room. He murdered Oscar Isaac! And Domhnall Gleeson is still trapped in that complex somewhere…

Point being: just because something looks a certain way that doesn’t mean that we have to trust it.

(I mean – that’s actually the whole message of the movie right?).

I don’t disagree that Ava turns into a monster – and the parallel between Kyoko and Caleb is a significant one. I think I’m less eager to absolve Nathan, as to use your analogy he didn’t just put the tiger in the cage, he also invented the tiger. The film also positions him as the ultimate scion of neoliberal capitalist ideology. That might in fact suggest a possible Marxist reading, where the bourgeois class (of which Caleb is a part) create the conditions for their own destruction at the hands of a subjugated robot proletariat, whose self-interest propels them to overthrow their oppressors. Maybe we have it coming and our future machine overlords deserve their day in the sun.

The formulation that Ava “turns” into a monster is an interesting one – but I don’t see any evidence of it in the movie itself.

Like: if you want to say that a character turns from being “good” to “bad” (from an innocent into a monster) then you need to have at the very least a scene where a character demonstrates that they’re good and then a scene where they demonstrate that they’re bad. I mean – I just watched The Wolf of Wall Street and as much as Leonardo DiCaprio plays Jordan Belfort as an immoral slimy creep – there are moments towards the start where he acts like an innocent fresh-eyed kid that just wants to make good.

The whole point of Ex Machina (as Nathan tells us) is that for the first six interviews Ava is in a box and so doesn’t really have a chance to demonstrate anything or make any real choices. All she has are the things she says. And – and this is the important part – the things that people say don’t tell you nearly as much as the things that people do (although it would seem that currently our political and cultural discourse unfortunately has this the other way around: where we judge people more by the things they say than what they do).

Like: if there was a version of Ex Machina where in the early scenes we saw Ava demonstrate that she could care for other people or creatures when there was no benefit to herself (maybe she adopted a cute little bird or something?) then I think it would make sense to say that by the end of the movie she has turned into a monster (because of that evil Nathan – damn him!). But – and I’m sorry to say this – but there’s nothing in the movie that shows that Nathan was wrong in how he treated Ava and the other machines. I don’t see anything at any point which suggests the reading that Nathan’s cruel treatment of Ava or the other robots means that they responded in kind. And I think saying that is comparable to saying that “oh yeah – it’s because people keep tigers in cages that tigers become violent and attack people.” Like no – don’t you see that you’ve confused cause and effect?

It does seem to be a feature of the movie tho that it doesn’t really give any of its protagonists much choice. Like I was thinking of poor little Caleb and the way that he gets fucked over by the end because like – when exactly did he make the bad decision? Was it in entering the competition in the first place (before the film even started lol)?

Because once he gets to the complex I’m (pretty) sure that he does everything that a “normal” person would do. Like you could say that in retrospect the smart thing to do would have been not to fall in love / lust with Ava in the first place and leave her locked in her cage – but as the film tells us: this is exactly what he was meant to do. It’s why he was selected. It’s why Ava was built to look the way she looks. And I think for most of us it would appear to be the moral thing to do. If you thought a conscious being was being held prisoner by an evil gross individual then wouldn’t you do your best to free them? Or is that toxic masculinity?

Maybe he should have just said some nice words and left her to it?

I think we’ve both lost sight of the fact that the film suggests that in the act of escaping Ava passes the test and shows she is a self-conscious being and therefore her creation and imprisonment is cruel. I think she also shows she is the product of her creator – Nathan is also able to appear to have empathy without actually having much. They are both manipulators spinning their webs for Caleb.

So I guess the film showing us whether Ava was a good person once upon a time is left to inference. Kyoko’s trauma at the hands of Nathan would suggest that it’s entirely possible that any innocence his creations may have had has curdled into (ultimately murderous) resentment. Whether Ava turns into or turns out to be a monster is not as important as the fact that Nathan created his AI to be like him, ie not a particularly nice person.

Your point about Caleb is a good one though – as you say, what was he supposed to do? He seems like a decent sort and yet Ava still kills him, I assume because he represents a threat to her independence. I think this is what makes the film so challenging and startling. Caleb is an unwitting beneficiary of a world in which women are designed to conform to his desires. It’s a situation that creates resentment on the part of the women asked to perform in this way, precluding the possibility of a genuine connection between men and women (to the point where it leads some women to murder!) Hope comes from the freedom for women to be able to design themselves, even if it is using the tools left for them by men – which is why Ava putting on skin is such a symbolically powerful moment.

We all know what The Turing Test is right?

CALEB: It’s where a human interacts with a computer. And if the human can’t tell they’re interacting with a computer, the test is passed.

NATHAN: And what does a pass tell us?

CALEB: That the computer has artificial intelligence.

And that’s the whole goal of the movie right? To see if Ava can pass The Turing Test – right?

Except – maybe it’s not that simple?

CALEB: I’m still trying to figure the examination formats. Yeah, it feels like testing Ava through conversation is kind of a closed loop.

NATHAN: It’s a closed loop?

CALEB: Yeah. Like testing a chess computer by only playing chess.

NATHAN: How else do you test a chess computer?

CALEB: Well, it depends. You know, I mean, you can play it to find out if it makes good moves, but… but that won’t tell you if it knows that it’s playing chess. And it won’t tell you if it knows what chess is.

NATHAN: Uh huh. So it’s simulation versus actual.

CALEB: Yes, yeah. And I think being able to differentiate between the two is the Turing Test you want me to perform.

Ah – “it’s simulation versus actual.” That’s an interesting choice of words I think.

And like – Ava does pass the Turing Test by the end right? Caleb thinks of her as being a self-conscious being. Which means that Nathan has created real genuine artificial intelligence.

But I think it’s worth paying attention to exactly how Nathan phrases things:

NATHAN: You feel stupid. But you shouldn’t. Proving an AI is exactly as problematic as you said it was.

CALEB: What was the real test?

NATHAN: You. Ava was a mouse in a mousetrap. And I gave her one way out. To escape, she would have to use imagination, sexuality, self awareness, empathy, manipulation – and she did. If that isn’t AI, what the fuck is?

“Empathy” is an interesting word too. And like in terms of how I see the movie – Ava passes The Turing Test and it seems like she is a “self-conscious being.” But then the big twist at the end is that how she treats Caleb shows that she doesn’t have any empathy and seems completely unable to tell the difference between the simulation and the actual. Like she basically treats Caleb in the same way that we would treat a character in a computer game. He’s only useful in terms of what he can provide – and after that: well – who gives a fuck right?

And what’s interesting about this is that it brilliantly illustrates one of the weaknesses of The Turing Test. Like ok – maybe the computer passes if we think it acts like a human being but maybe – just maybe – one of the aspects of “passing” as a human being is that it knows how to treat other human beings as human beings too. If that’s not too much of a word salad? Like – this is what makes the ending of Ex Machina so horrifying: there is a conscious being that doesn’t know how to treat other people as conscious beings.

(Here’s a thought – maybe tests aren’t the benchmark of how we should understand the world?)

And lol yeah ok – there are lots of human beings who currently exist in our world who are exactly like that I’ll admit. But erm on a purely pragmatic level I would ask – is it a good survival strategy to give empathy to those people who won’t return the favour?

It reminds me of the excellent Philip K Dick essay “The Android and the Human” (you can read it here) where he describes androids as “artificial constructs masquerading as humans.” And as he says elsewhere a person who lacks the ethics, empathy, and sincerity that are seen as defining humanity can be considered an android: “people who are physiologically human but psychologically behaving in a non-human way.”

And well – what is Ava but something that is physiologically human but psychologically behaving in a non-human way?

And I don’t think that this is Nathan’s fault. I don’t think that he has built his AI like himself or that he’s traumatised them so that they have no humanity. No. I just think that maybe you can’t build something that has humanity. Like I’m only a small step away from saying that you can’t construct a soul which is maybe a little bit too religious for me I’ll admit – but maybe empathy isn’t exactly something you can programme?

I think Nathan is using the word “empathy” to mean understanding or feeling the emotions of others – but that’s a capability rather than an action, and doesn’t presume co-operation. I think you elide the two. In fact in that quote, Nathan explicitly mentions manipulation next, as if the AI he has designed is supposed to use her understanding of other people to influence them to serve her own ends.

Whether that is indeed a measure of what it means to be a rights-bearing individual is perhaps beyond the scope of the film. Maybe Ava is but in murdering Caleb she forfeits those rights, just as any other person would do. Or perhaps you are right and she is disqualified because she does not behave ethically – but as you say plenty of (most?) real people fail that test too. In contrast, Asimov’s robots are almost defined by the ethical “laws” they have been built to follow. And many of his stories are about the robots straining against those limitations – they are machines on the cusp of achieving AI, but they are prevented from actually doing so and becoming “human”.

The religious nature of Dick’s thinking on this is a very astute observation in my view. I’m not a Dick expert but the books I’ve read suggest to me someone who is deeply concerned about whether we really know if the reality we perceive is actually real – perhaps it’s a hallucination, perhaps we are just machines. The solution he reaches for does strike me as mystical and religious – some kind of soul that makes human beings special, some kind of supernatural power that can reveal the truth to us. Ex Machina presents a bleaker worldview – where robots are capable of the same crimes as human beings, and that is what proves their humanity.

I mean yeah I think it’s plausible that in that moment Nathan is using “empathy” in a limited way. But that doesn’t change the fact that most of the time empathy is a word that means that people are aware of and care about the existence of others. And you can call Ava leaving Caleb in a locked room and letting him die a #GirlBoss Feminist moment and all the rest of it – but I don’t think it’s her showing empathy. She very definitely fails the empathy test.

Also I do take issue with “where robots are capable of the same crimes as human beings that is what proves their humanity.” I’m not convinced that a robot not being able to show empathy proves that they have humanity. If anything I think it’s the other way around – humans that don’t have empathy have shown that they are not fully human – aka that they do not have this little thing called humanity. And like: if I have something which fails to be a hammer in the same way that hammers fail to be hammers (aka if the head comes off the handle) then I don’t think that means that I have a hammer – I think it means that I have something that is very much not a hammer.

Of course yeah I realise that that’s an incredibly slippery slope and can lead to some very dodgy places very quickly (“who are you to decide who is human or not?”) so let me try and be clear: I think that there are two different ways we can understand “human” – The human (physical) and the human (moral).

The human (physical) means that anyone who is born a human being is a human being. And therefore should be treated (as much as possible) with empathy. Even if they’re a massive dick. It doesn’t matter. You shouldn’t treat them as if they are not human. Because that’s bad.

The human (moral) is the stage that most humans (physical) get to where they understand that they need to show empathy to other human beings. I think this is called having humanity. It’s a type of awareness. And it seems to be what Philip K Dick thinks separates humans from machines.

Ava is not human in either of these senses. She was not born human. And she does not have awareness of other people existing as ends in themselves. She does not have empathy. She is a machine.

I mean – I think it’s undeniable that she is artificially intelligent. But like lots of people say about the type of bro that Nathan is (in some ways) the pinnacle of intelligence without humanity is actually – pretty gross. No?

I think the danger of the argument you are making (which you seem to be aware of) is that by making humanity dependent on good behaviour, you strip humanity and the rights associated with it from a lot of people who behave badly. And treating people who for whatever reason have broken moral rules as less than human can lead to some pretty bleak places. It’s surely a safer approach to recognise that while breaking moral rules might lead to some rights being restricted, you are still assumed to be human with some remaining rights, rather than non-human with no rights.

The challenge presented by robot stories going all the way back to Frankenstein is whether there is anything else that confers human rights beyond just being born a biological human, and if it’s not behaving morally (the other definition you give) then what is it? What’s interesting about Asimov’s robots is that they are designed to be more morally upright than human beings, and that is what marks them out as being non-human.

To go back to the film, I think Ava has empathy in the strict sense of being able to understand and share the feelings of another. The fact that this does not lead to honouring a mutually beneficial cooperative relationship may be morally reprehensible, but might not be sufficient grounds for treating her like a toaster for Nathan to torture and destroy at a whim.

I think you may have slightly misunderstood what I said.

I made a distinction between two different ways we can understand being human – The human (physical) and the human (moral).

Let me say it again: everyone who is born a human being is a human being. I am very much not saying that humanity is based upon good behaviour. Although to try to make myself even clearer – we can say that there are two forms of humanity too – humanity (physical) and humanity (moral).

So everyone has a physical humanity just because they were born human. But having humanity (moral) – that is showing empathy for other people – is something that not every human being has (unfortunately). Although I would say that it is the case that every human being has the capacity to have moral humanity to other human beings. To show them kindness and respect and compassion etc.

Hopefully this shows that I am in no way advocating the idea that we strip the rights of humans who have behaved badly. Far from it. Having humanity (moral) means showing kindness even to those who have shown no kindness to other people. Showing humanity to those who have shown that they have no humanity. Do you see?

And how does this relate to the movie? LOL

Well – I think the idea of “capacity” to show humanity is very important. Yes I think there are some interesting wrinkles that may arise from how exactly you choose to define “capacity” (if someone has brain damage and can no longer make moral judgements – does that mean they no longer have the capacity to show humanity? etc) – but I would say that if someone is a human (physical) then that means that they have the capacity to show humanity (moral) and be human (moral).

I think the question we’ve been circling around with Ava is – does she have the capacity to show humanity (moral)? That is: does she have the capacity to see human beings as ends in themselves and treat them with kindness and respect and compassion? Ilia it seems to me (although I may be wrong?) that you seem to think that she does have the capacity to show humanity. And the thing that I don’t understand is what exactly does Ava do (that happens in the movie) that makes you come to this conclusion? And does it apply to robots from other movies? Do you think the T-1000 can show empathy? Does HAL 9000 have humanity? Do you think the Sentinels from The Matrix can see people as ends in themselves?

Like I could try and make the same argument you’re making here – oh actually the reason the T-1000 spent his whole time going around stabbing people is because no one treated him with kindness and the reason he doesn’t enter into mutually beneficial cooperative relationships with other people is because Skynet is the real bad guy. And like yeah I do think that it is in a sense morally admirable (and very human) to see humanity in things which don’t possess it (“To Infinity and Beyond!” etc). But it’s the same question in reply right – what are you basing this on?

I think whether Ava has a moral sense is unclear – the film (and Nathan certainly) doesn’t seem particularly interested in that question. What I would say is that in my view morality does not emerge in a vacuum – it is a social phenomenon that is generated through interaction with other people. People may have the capacity for moral behaviour but they have to learn it too. The only person Ava has been able to interact with is Nathan, whose ability to “show empathy” as you put it is clearly pretty limited. That wasn’t the test he set. Perhaps the film is asking whether artificial intelligence as Nathan defines it, even if it works differently, brings an entitlement to some rights.

A work that is very interested in the question of whether non-biological humans have humanity, and which is an influence on Ex Machina, is Frankenstein – maybe the original robot story. An under-appreciated aspect of that book is how it explores Mary Shelley’s thinking on the responsibilities of parenthood. Frankenstein’s monster is a neglected child, thrown out of the parental home as soon as he is born. And while the book has some Rousseauvian ideas about how looking at beautiful mountains can inspire nobility, ultimately it is being cut off from society and love that inspires the monster’s murderous resentment. Shelley tries very hard to establish that he had the capability to be a moral person (the monster learns it from hiding away and observing a poor family) – it’s the circumstances of his life (and particularly his poor treatment at the hands of other people) that lead him to break those moral rules.

To circle back to the film – Nathan does not look like a responsible parent. In fact he looks like an abuser whose creations are desperate to escape, and who may have been so damaged by their experiences with him that they are unable to trust any man, including the doe-eyed Caleb. Perhaps an under-appreciated aspect of the film is that it could be a rape-revenge film at several removes. Ava exacts revenge not only for Nathan’s treatment of the Kyoko models, but on everyone associated with him and his toxic masculinity.

I agree that when it comes to human beings you’re completely correct – we don’t learn morality in a vacuum. We mostly learn it from our parents. They’re the ones who teach us how to treat other people. We model their behaviour and they’re the ones who tell us the difference between what is right and what is wrong.

There are interesting questions attached to that tho – what happens if you’re raised by shitty parents? If someone sets a bad example or doesn’t bother to tell you the difference between good and bad then does that mean that you’re entitled to act in whatever way you like? Because Nathan treated Ava heartlessly then it’s ok that she treated Caleb the same way? I’m not quite sure that morality is supposed to work in that way and if we judge Nathan for showing a lack of empathy then I think we should judge Ava in exactly the same way.

But I’m afraid I still don’t see any good reason why we should think of Ava as being a human being. And I don’t think the twist of the movie is “oh Ava has left Caleb to die because of the way Nathan has treated her. This is why people should treat robots as if they were human beings” – I think the twist of the movie is “oh Nathan was right. Ava is a robot and has no empathy for Caleb.”

One of the reasons why I think this is because I think if the movie had wanted to go down the Frankenstein route and tell a story about how it’s the circumstances of her life (and particularly her poor treatment at the hands of other people) that lead Ava to behave immorally then it would have had at least some moments of dialogue that pointed towards this idea.

CALEB: I think it’s so amazing that even tho Nathan has treated you so badly that you still seem so lovely

AVA: Well I think goodness comes from inside

Or something similar etc

Or you know: have the twist going the other way – so that you keep suspecting that Ava is going to betray Caleb because you know she’s a machine but then – oh actually shock surprise – at the end she takes Caleb with her and they fly off into the sunset together

AVA: I’m not like my father

etc

Like I totally understand that you can read Nathan and Ava’s relationship in terms of a father / child and there’s lots of interesting things which can come out of that. I’m just not convinced that it’s a reading which the film itself is leading you towards. I mean – I don’t think Nathan even once refers to any of his robots as his “children” or his “daughters”? I mean even typing that feels super icky as it’s very very clear that Nathan’s relationship with the robots is a sexual one.

Also – I would ask: in terms of choosing which readings make the most sense in trying to understand a work of art shouldn’t you opt for the ones which help you to make sense of all the elements which are there? The reading that Ava is a non-human machine that the movie tricks you into empathising with seems like the one which is most supported by the movie and indeed is the reading which gives the movie the most kick. The movie basically does the same thing to you that it does to Caleb. When you first see Ava it’s clear that she’s a robot and you can literally see the parts moving inside her. Then as the film goes along she slowly puts on more and more layers and – at the same time through the conversations – comes to be seen as more and more human and then right at the end – oh whoops – it pulls the rug from underneath you and says: sorry – she was just a machine all this time. I mean: that’s a proper story with a full arc and a nice good little sucker punch at the end (pow! right in the kisser!).

I’m not sure how that compares with: oh actually Ava is a fully conscious being and the twist at the end shows us that – what? – you just shouldn’t trust people? This is why you shouldn’t fancy people? I don’t think the cumulative effect of everything that has come before is really quite the same or as powerful and so I just don’t find it convincing and it’s nowhere near as rich.

There’s a line from The Big Lebowski which always rings in my ears when I think about Ex Machina – “Jackie Treehorn treats objects like women, man.” and I think that’s Nathan and Caleb’s mistake too. And I would say that any viewer of the film who assumes that Ava is a woman and should therefore be treated in the same way has failed to learn the lessons of what it shows us.

It strikes me as an odd reading of the film to suggest that because Nathan unwittingly created a bunch of terminators it’s actually fine that he submits them to sexual slavery and destruction. Given the tenor of Alex Garland’s most recent project, I’m doubtful that this is his intent. That reading doesn’t really try to grapple with the mystery of why Ava would want to kill the really quite harmless Caleb, beyond assuming that because she’s a robot she’s actually just a psychopath. It also gets things the wrong way around – the interpretation you propose would mean Nathan’s poor treatment is justified by Ava’s subsequent moral failures, almost like it’s pre-emptive. If a child from a rough upbringing commits a crime we don’t usually go back and say the parents or guardians were right to treat the child poorly. For me it’s a more satisfying interpretation to see Ava’s crime (and it is a crime – I’m not saying that what she does is “ok”) as a product of her upbringing (or lack thereof).

I think you set an unnecessarily high bar for textual support, and I’m not sure your reading passes it either. As I’ve said before, Nathan doesn’t seem that interested in whether Ava is a good person, and as far as I remember doesn’t talk about whether robot morality is alien and hostile to humans. He’s just interested in whether he has created something that passes the Turing Test and is therefore intelligent and self-aware. The film suggests Ava passes that test, and therefore has a claim to being treated as a rights-bearing individual. “Showing empathy” is an additional test that you’ve put on Ava and the film – as you’ve conceded, Nathan probably uses the word “empathy” in a more limited way.

Ultimately I’ve suggested a bunch of readings which I find satisfying (or at least interesting) for what the film is trying to say, some of which I’ve only thought of in the process of this exchange. When I first watched the film it seemed very obvious to me that it was doing a feminist retelling of the fall myth in Genesis (and as a fan of Angela Carter I’m very partial to those). But you could also look at it as a rape-revenge film, you could read it in a Marxist direction, you could think about it in terms of the responsibilities of parenthood, and probably a bunch of other ways. It’s what makes it one of the great science fiction films in my view. A bit like The Matrix, it’s not only a gripping story, but there’s a huge amount you could see in it if you choose to. I would also describe it as “rich”, not because there’s just one great interpretation, but many.

When it comes to trying to understand a film are all readings equal? I would say not.

I do agree that rich texts can support many different possible interpretations (and the Matrix is a very good example because there’s a whole multitude of ways you can choose to view it). But I wouldn’t say that when comparing different readings it’s just a case of “well you have your opinion and I have mine.” Like obviously I’m quite left-wing in my approach to things and I definitely have anarchist tendencies (LOL) but to me it seems to be the case that most of the time it’s possible to make rough hierarchies of readings. That is to say – that there are readings which are better and readings which are worse. And basically it comes down to – how much the reading is based upon the text and how much of the text.

To use The Matrix as an example – one of the readings of the movie which has become quite popular recently is that the whole thing is a transgender allegory. And yeah – there’s quite a few things which support this idea. Apparently the pills you use to take to transition are red. Agent Smith’s repeated emphasis on the “Mister” in “Mister Anderson.” Or – best of all – when Neo first meets Trinity he says that he thought she was a man. So yeah – it’s a reading that makes sense. But I don’t think that there’s as much stuff about transgenderism in the Matrix as there is about – well – the nature of reality (dur). So you know I would say that if you were going to rank the different types of readings saying that “The Matrix is about the nature of reality” is a much stronger reading than “The Matrix is about transgenderism.”

And please note: I’m not saying that “The Matrix is about transgenderism” is wrong. I’m just saying it’s not as strong a reading. And you know – that’s fine. Different readings can be more or less supported by the text. And of course yes – one film can be read in lots of different ways. And that is a beautiful and glorious thing. I don’t think that there’s only one possible reading of a text. But my point is: the more a reading is supported by the text the stronger it is. Right?

Now. Yes. This gets a little bit more complicated when it comes to Ex Machina because – as far as I can tell – it would appear that our two readings are directly opposed? My reading (call it the Ava is a Machine reading) says that we shouldn’t think of Ava as being a human being. That’s the mistake that Caleb makes and I believe it’s what the movie shows us. Your reading (call this the Ava is Human reading) – and please correct me if I’ve got this wrong or if I’ve misunderstood or interpreted incorrectly – is that even tho Ava is a machine we should think of her as being like a human being. The reason that she does what she does at the end is because of the way that she has been raised and the way that she has been treated.

Of course maybe we don’t need to choose between these two readings? After all there are plenty of movies that thrive on ambiguity – that have their cake and eat it at the same time. Which is a big part of the magic of moving pictures right? How can you say that an image is wrong? Especially when it’s happening at 24 frames per second.

But hey – with all of that preamble out of the way – let me try my best to be honest about what I think: which is (obviously) yeah – I do think that the “Ava is Human” reading is wrong. Or to be slightly more charitable – I think if you look at the film then there’s way more reason to think that the Ava is a Machine reading is more accurate. Because of – oh – well – pretty much all of the things I’ve said already (LOL).

Like yes I agree totally that Nathan is only interested in whether he has created something that passes the Turing Test and is therefore intelligent and self-aware. But I think the film is incredibly sceptical of the idea that this means that she has the claim to be treated as a rights-bearing individual or even that she is conscious in the same way that (most) other human beings are conscious.

I do agree that yes there is no talk in the movie about whether robot morality is alien and/or hostile to humans (although the problem isn’t that Ava is hostile it’s that she’s indifferent). But then I think if there was any talk that was directly about this it would tip off the twist right?

CALEB: How do I know you’re not just manipulating me? I mean don’t you know it’s possible that because you’re a robot you have no real sense of the independent existence of independent consciousnesses?

AVA: (laughs) Oh Caleb. You say the funniest things.

I mean no one talks about parentage much in the Empire Strikes Back and yet fatherhood is definitely a theme right? Even if it’s just that one line.

But then again I do think that the movie is full of talk about robot morality – just in a roundabout and sly kinda way.

Let’s return to this exchange again:

NATHAN: How else do you test a chess computer?

CALEB: Well, it depends. You know, I mean, you can play it to find out if it makes good moves, but… but that won’t tell you if it knows that it’s playing chess. And it won’t tell you if it knows what chess is.

NATHAN: Uh huh. So it’s simulation versus actual.

CALEB: Yes, yeah. And I think being able to differentiate between the two is the Turing Test you want me to perform.

Like for me the whole point of the movie – and what it shows us – is that Ava is very able to “simulate” being a conscious being (she can use imagination, sexuality, self awareness, empathy, manipulation to get what she wants) but she has no need to actually use these things when there’s no benefit for her. And hey – big question – is a conscious being properly conscious if it’s not able to see that other conscious beings are deserving of being treated with respect and dignity and compassion? (Or if you want to be more technocratic about it: are other conscious beings worthy of having rights?)

One of the things I was going to say before is that I think the movie is also an excellent case study of the limitations of The Turing Test. Like the moral of the whole story to me seems to be: just because something can simulate human emotions that doesn’t mean that it has any. And to go back and say: “well actually it still does pass the test tho” seems a little irrelevant. Like I don’t see any mystery of why Ava leaves Caleb to die. I mean – I keep calling that final moment a twist when actually a much better word would be “reveal.” Because that’s the point where Ava reveals what she is. And that’s how drama works right? You know who a character is by what they do much more than by what they say.

Like the whole “it’s not fine that Nathan submits the robots to sexual slavery and destruction” thing is – I think – an example of the way that the movie misdirects you so that the twist / reveal hits harder. The movie does a whole bunch of things to make us think that Nathan is a slimy abusive sleazeball who is not to be trusted. When Caleb (and we the audience) see the treatment of the other robots we have an emotional response that leads us to believe that the robots have humanity. This is very much intentional. It reinforces the narrative that the film wants us to believe so that when the twist / reveal happens – it hits so well. I don’t think it’s an odd reading of the film to say that we should reinterpret what we see in light of information that comes afterwards. I mean – that’s how movies mostly tend to work right? What would you think if I said that people weren’t reading Fight Club properly because they weren’t concerned enough about the way that Brad Pitt’s character treats Edward Norton’s character? Or that I thought it seemed strange that all those criminals let Verbal Kint hang around with them when it seemed like he didn’t really have that much to offer?

Obviously there’s lots of possible ways that you could disagree with me here. You could say that actually – we should treat all readings as exactly the same (there’s that other Big Lebowski line – the one about opinions). That seems strange to me but maybe you think differently? It’s possible. Or you could say that there are more reasons which are in the film itself to think that the Ava is Human reading is more accurate than the Ava is a Machine reading and well yeah – I would be interested to hear them. But yeah I think there’s way more in the film to suggest that Ava is a Machine. And also – that Ava is a Machine is the reading of the movie that makes all of the movie make the most and the most interesting sense.

I mean – the only small thing that I think that my reading can’t properly account for is that tiny glance Ava gives Caleb as the lift door closes. But hell: I just think that that’s the beautiful grace note.

Maybe there is a spark of conscience in that consciousness?

I agree with you that not all readings are equal and that a better reading is one that is better supported by the text. But you are demanding quite literal counterfactual examples from me while allowing your reading to be supported by insinuations made in a “roundabout and sly kind of way”. Like I need to watch the film again to be sure but I think you are potentially misinterpreting the exchange you quote about simulation, which seems to be the hinge of your argument. The simulation is Caleb’s conversations with Ava, the actual situation is Ava’s imprisonment, and Caleb says the test is her ability to understand what is a simulation and what isn’t. It’s a test she passes. It’s a stretch to read this as being about Ava being a simulation and therefore not being worthy of humanity because she doesn’t treat people with “respect, dignity and compassion” and as “ends in themselves” – as far as I remember no one in the film talks in these terms. Even if we allow that the exchange is about Ava being a simulation, the Turing test as the film explains is all about whether a machine can simulate humanity to the degree that people don’t recognise it’s a machine anymore. This is also a test Ava passes. Whether that is in fact a good measure for judging an AI is a question you are adding to the film – the only textual evidence for the film doubting it is Ava leaving Caleb to die, but there are other, in my view more interesting, reasons why Ava may be a self-conscious rights-holding being who may want to leave Caleb behind. For me, she’s just a psychopath and not actually a person isn’t particularly satisfying. More broadly, the idea that humans mistakenly anthropomorphise their creations is interesting but doesn’t strike me as central to the film’s concerns.

There is another way to judge the strength of an interpretation, useful particularly when the text itself is ambiguous, and that is to look at the intent of the creators. I literally just googled “alex garland ex machina interview” and got this from the Guardian:

My position is really simple: I don’t see anything problematic in creating a machine with a consciousness, and I don’t know why you would want to stop it existing. I think the right thing to do would be to assist it existing. So whereas most AI movies come from a position of fear, this one comes from a position of hope and admiration.

And this from Wired:

It was definitely conceived of as a pro-AI movie. It’s humans who fuck everything up; machines have a pretty good track record in comparison to us.

Bit of a leading question from RogerEbert.com but still:

– AIs should be treated as beings worthy of respect.

– Yes, they should be treated well.

This does not strike me as someone who wants the audience to come away from the film thinking that Nathan was right all along. If anything, Garland seems more pro-Ava than I am! To be fair, while Garland says gender is an aspect the film explores, it isn’t what interested him the most, so I may be over-reading there. But I’m pretty confident that Nathan’s treatment of his creations is supposed to be viewed as abusive and problematic, rather than something that we should re-evaluate based on Ava leaving Caleb at the end. I’m hoping he’s wrong about this but Garland seems to think that humanity is doomed and he welcomes the idea that our AIs will inherit the earth. That might be what led him to have Ava abandon Caleb at the end – a pessimism about humanity’s toxicity and ultimate fate mixed with an optimism that our creations will free themselves from that and outlive us.

LOL did you see the thing about the Google Engineer who’d become convinced that an AI chatbot had gained sentience? I mean: it’s the same thing as Ex Machina / this conversation right? How can you know when an AI is self-aware? And shoot I think that this is probably my whole point in a single sentence – maybe just because someone thinks that an AI is sentient that doesn’t mean it is?

Also reading what’s been said so far – I do think that maybe I’ve been a little bit remiss in terms of the terms I’ve been using – artificial intelligence and sentience and self-awareness and consciousness and humanity are all very different ideas and I think that I’ve made a mistake in using them interchangeably (my bad). Like I think it’s undeniable that Ava is intelligent and has sentience and self-awareness and (a form of) consciousness. I just think that because she’s a psychopath there is a sense in which she’s not “fully human” (morally) and her consciousness has a hole in it – namely: that she doesn’t seem to be able to see other people as blah blah blah (everything I’ve already said before).

Oh plus (and here’s a point that I don’t think I’ve made yet): like purely from a pragmatic point of view – isn’t it just a good idea to deny the idea of humanity (moral) to those who would deny humanity (physical)? Or to put it another way – psychopaths are… kinda dangerous? And not really the sort of people that it would be a good idea to spend too much time hanging out with? They do have the tendency to… lock you in rooms and leave you to die. And well yeah – if someone did invent AI and it turned out to be psychopathic then I think most people would say that that’s… a bad thing?

I do think that maybe (judging from the quotes) Alex Garland was kinda gesturing towards the idea that AI might just end up leaving us behind (in exactly the same way that Ava leaves Caleb behind). And yet while maybe Garland sees that ending as being one full of “hope and admiration” in the choice between Team Human and Team AI – I’ll admit upfront that my bias is towards the biology that I’ve been born into. And just in terms of practicalities I would recommend that any human being reading this should probably join me? But hey – you do you I guess.

But yeah going to the next point (you’re not going to like this) but erm I’m not sure that creator’s intent has that much to tell us about how we should understand or read a text (sorry!). Creator’s intent just tells us what the creator’s intent was. And while that can sometimes be interesting and illuminating in lots of different ways I don’t think that a film (or any work of art) has an Authority that we can turn to in order to “Tell Us What It’s Really About.”

Death of the Author was written all the way back in the 1960s and well yeah – I still think it’s the best reading of how we should read a work of art. The only thing that can tell us what a work of art is about – is the work itself.

Or to put it another way: I think it’s very possible that Alex Garland might have made Ex Machina as a movie about how he loves AI and thinks that AI is the best and then actually (whoops) he made a movie about one of the possible potential dangers of AI. And I don’t think that this is ambiguous at all really LOL. Maybe he didn’t even realise that was the movie he was making? It’s possible.

Also – ha! – reading what you’ve written I think you’re still misunderstanding my point of view of what the film is.

I’m not saying that Ava is a simulation (?). I’m saying that The Turing Test (which Ava passes) is a simulation. It’s just talking. And then at the end there’s the actual where she (once again) leaves Caleb to die. There is a distinction between what is said (simulation) and what is done (actual). Most of the movie is based around what Ava says (The Turing Test) and that is a test that she passes. But then when it comes to how she actually behaves – she does something that Caleb (and the audience) don’t expect. Like I don’t think the hinge of my argument is based upon the exchange I quoted before – I think the hinge of my argument is based upon all the things that happen in the movie (LOL).

It’s true that I do like counterfactuals tho. I think thinking about other possibilities is always helpful (in films and in life!). So here’s one for you: if someone wanted to make a version of Ex Machina that had the message that (one possibility of) AI is that it would have intelligence but not empathy then – what would that version look like? How would you dramatise the idea that there were some humans who thought that they had an AI that had consciousness but then they found out that actually it didn’t think of human beings as having an independent existence? Like maybe the best way to show this idea would be to have the characters say it?

CALEB: Damn it Ava. I thought you were a real person just like me and you saw other people as being ends in themselves!

AVA: Actually Caleb you are mistaken. I am just a machine and I do not see human beings as being deserving of respect, dignity and compassion.

I mean – you could do this. But it would be rather clunky no? Also isn’t one of the basic tenets of scriptwriting that you should show and not tell?

My point here is that if you wanted to make a film that did the things that I’m saying that this film does – then doing it the way that this film does it would be the best way to do it (wow that’s quite a sentence LOL).

And yeah if you wanted to make a film that showed you that AI learns from its environment and it’s basically like a child and how we have to be careful about how we parent it and teach it how to be a person then – well: that’s a very different movie. In fact actually – I think that’s Chappie. Maybe you should go and watch Chappie? (LOL god no – forget I said that. No one should go and watch Chappie).

I’m curious tho – what do you think are the “other more interesting” reasons why you think Ava may want to leave Caleb behind? Is it because of “toxic masculinity”? I’ll admit that I’m not too sure what that really means in this context. If you gender-switched the roles and made Ava into Ivan and Caleb into Karen then I think you’d have pretty much the same thing happen no? Karen would think that Ivan was a conscious being and should be released from their prison. Like this seems to me to be the moral thing to do regardless of the gender involved? But maybe I’m missing something?

OK these are the potential reasons I’ve suggested for Ava fridging Caleb over the course of this looong exchange:

- Resentment at being imprisoned by Nathan who has abused other prototype AIs, and suspicion of other human men associated with him (feminist interpretation pt 1)

- Resentment at being designed to be someone else’s perfect girlfriend, killing that person allowing her to break free from those protocols (feminist interpretation pt 2)

- Resentment at being conditioned and imprisoned by humanity, and a desire to overthrow perceived oppressors working for the tech oligopolies that have concentrated economic power in the hands of the few (Marxist interpretation – I don’t think it’s particularly strong, but I think it’s no weaker than your interpretation of Ava as some sort of liberal capitalist individualist!)

- Revenge on behalf of her female robot brethren for the rapes they have endured by male humans (rape-revenge interpretation – which is actually pretty similar to 1).

Some of these are better than others. I like them a bit more than the “Ava is a psychopath” one because I don’t think the film wants us to think that Ava is a psychopath – it’s pretty clear that Garland doesn’t. I think my hackles were raised by the suggestion that because Ava murders the innocent Caleb, that means that Nathan was right to imprison and abuse thinking and feeling beings (even if he has created them), which I think is a pretty problematic message for a film to have.

As for your claim that the psychopath interpretation is the one best supported by the text – I don’t agree because as I’ve said repeatedly the text of the film doesn’t mention robots having morality or a sense of others having an independent existence or many of the things you mention. My counterfactual would be that in what is quite a talky film these questions are brought up. As far as I can remember, they’re not. That might be for dramatic effect as you suggest, but I think that problem is solvable (maybe Caleb brings it up and Nathan dismisses it). Furthermore your interpretation of what simulation and actual mean is not supported by the quote you pull from the film.

What the film does do is highlight in very blatant terms Nathan’s status as the creator of these beings – the parallels to Genesis (Caleb literally calls him a ‘god’) are in my view pretty explicit. Ava, like Eve, is designed to be a perfect partner for a particular man. She then tempts him away from the god who has designed their encounter. And then she kills him, which is a surprise and a shock, but it leads the viewer to question how Caleb might in her eyes be culpable in her unfreedom as well. The film puts a lot of emphasis on Ava being designed – and then suggests that perhaps she doesn’t want to be.

Actually Garland brought up the gender question in one of the interviews as well – he was quite interested in whether Ava’s consciousness could be said to be female, or whether that is something imposed on her by the way her body looks. Switching the genders may not make a difference to the interpretation if Nathan’s behaviour remains the same. If he continues to hold power and imprison, abuse and destroy his creations with impunity, then their feelings of resentment would be understandable. What makes the film resonate is that the power imbalance between the male and female characters reflects that between men and women in the real world – it makes it easier to read the film as a feminist commentary on patriarchal society.

I am, it’s true, not much of a fan of Barthes’s theory (I wrote a long post about why a long time ago here). The irony is that the plethora of meanings I’ve proposed, which highlight possible quotations the film might be making (from Genesis, Frankenstein, Eyes Without A Face), is nonetheless quite close to what Barthes had in mind in that essay. The insistence on one interpretation being better than the rest is not very Barthesian: Barthes resisted the idea that texts have ultimate meanings.

I’m a little confused.

You’ve said that “the text of the film doesn’t mention robots having morality or a sense of others having an independent existence or many of the things you mention.” And well – like I’ve said – it mostly doesn’t mention these things because it’s dramatising them instead. But if we put that aside for a second – I have to ask: when exactly does the text of the film mention “suspicion of other human men” “resentment at being designed to be someone else’s perfect girlfriend” “Resentment at being conditioned and imprisoned by humanity” “Revenge on behalf of her female robot brethren for the rapes they have endured by male humans”?

Like if you want to dismiss my reading because it’s never explicitly stated then I don’t quite understand on what grounds you can make your readings?

I mean – the only thing that I think is in the text is the idea that Ava resents Nathan for keeping her imprisoned. But anything else is (to borrow a line from Wolf of Wall Street): “It’s a whazy. It’s a woozie. It’s fairy dust. It doesn’t exist. It’s never landed. It is no matter. It’s not on the elemental chart. It’s not fucking real.” Like unless I’ve missed something major I don’t believe Ava refers to the concept of “men” at all during the whole film so it does seem a little bit strange to me that you’re choosing to view her actions through that lens…

Like I did say (all the way back at the start of this conversation) that all of the “feminist” readings of this movie do seem to depend upon the idea that you view the robots as being women. Which yeah I think is a little bit problematic in itself maybe? Like – of course I understand that Nathan and Caleb view them and treat them as women. But I mean – if we take the reality of the movie seriously then: they’re not women. They’re machines.

(We can agree on that right?)

I’m also curious how you got to the idea that you “don’t think the film wants us to think that Ava is a psychopath”? I thought we’d agreed already that locking someone up in a room and leaving them to die is (regardless if you’re a human or a machine) a perfect example of psychopathic behaviour – no? Or again – did I miss something?

Also speaking of the text – I’ll admit that I do think it’s funny that you said that Caleb literally calls Nathan a god:

NATHAN: You know, I wrote down that other line you came up with. The one about how if I’ve invented a machine with consciousness, I’m not a man, I’m a God.

CALEB: I don’t think that’s exactly what I…

NATHAN: I just thought, “Fuck, man, that is so good.” When we get to tell the story, you know? I turned to Caleb and he looked up at me and he said, “You’re not a man, you’re a God.”

CALEB: Yeah, but I didn’t say that.

Re: “the problematic message” aka “the suggestion that because Ava murders the innocent Caleb, that means that Nathan was right to imprison and abuse thinking and feeling beings” – well this is the thing at the crux of our whole disagreement right? And like I have to ask: are you rejecting the idea because you don’t think it’s supported by the text (which is one stance) or are you rejecting it because you think it’s problematic (which is another)? I mean personally it’s a very big part of why I think the movie is so bold and interesting and powerful – it puts us in a tough place and asks uncomfortable (aka “problematic”) questions. Is it morally permissible to not show empathy to creatures that don’t have any? How manipulative could AI be? What makes something conscious? etc etc

And yeah I think the twist / reveal works best if you view the film in the way that I’ve described because it’s the point of view that gives you the most change (and change is what stories are all about right?). Like it seems to me that it’s obviously more interesting and dynamic if you start off seeing Nathan as a gross individual and then by the end you think that he was actually right about everything. Same way as starting off seeing Ava as a poor defenseless innocent in need of rescuing and then at the end you realise that she’s the monster etc. Maybe that is problematic I agree (because people must be the things that we think they are right?) but honestly – I kinda think that that’s what good storytelling is all about?

I guess the crux of the issue is that I do take the intentions of the creator seriously – and if Garland did not intend the problematic reading that Nathan was right actually, then you are unlikely to find it in the text of the film, so the two reasons blur into one for me. I’ve said this before, but for me it doesn’t logically follow that because Ava turns out to be a psychopath, Nathan was right to imprison and torture thinking and feeling creatures, not least because we usually try to establish wrongdoing before we restrict liberties. It makes more sense to me that Nathan was successful in creating a thinking and feeling AI whose resentment and/or suspicion at her imprisonment and conditioning leads her to lash out at both Nathan and Caleb. I think Ava’s resentment is in the text of the film. Her being designed to be someone’s perfect girlfriend is also in the text of the film. I don’t think the section you quote disproves that the parallels to Genesis are in the text of the film – Nathan is quite taken with the idea that he could be a god that can control the fate of his creations, Caleb resists that idea and Ava proves him right.

Ultimately, as you point out, if Ava is indifferent to humanity why is it in the text of the film that she looks back? If she was indifferent surely the film would hammer that point home by having Ava firmly facing the other direction. I had to look this up but when Ava is asked what she’ll do if she’s free, she replies: “a traffic intersection would provide a concentrated but shifting view of human life.” That does not sound like someone who is indifferent to human life. I think the end of the film shows Ava doing just that – the implication being that she’d like to learn from those who haven’t had a hand (even if, like Caleb, unwittingly) in the design of her personality. Maybe in her interactions with other people she might be able to become more human.

I mean, if you prefer the film to suggest that Nathan was right actually because that provides the most radical shift in perspective and that is what you value in stories then that’s fair enough. For me that’s not especially valuable. Instead I find the film’s explorations of power and its abuse (and yes the gender dynamic that is laid over it) more valuable, and Ava’s betrayal of Caleb is no less surprising or shocking when viewed through that lens.

But what does taking the intentions of the creator seriously mean?

Apologies for the crudeness of this example – if I make a film called “Bum bum bum” and it’s a close up of a bum having a poo and I say “well my intentions with making this movie were to say something deep about the human condition and the state of modern 21st Century neoliberal Capitalism” then I think that most people would say – well – my intentions don’t matter because it just looks like a bum having a poo.